How PubNub Can Turbocharge Your Machine Learning Algorithm

Let's take a look at not just what machine learning is, but also the various tradeoffs engineers must make in devising, developing, and improving effective and useful machine learning algorithms. We'll also discuss how many of today’s challenges with machine learning can be circumvented by building PubNub into the network's design.

What is Machine Learning?

Machine learning sits at the intersection of computer science and statistical analysis. By extracting and manipulating data from the world around us, machine learning engineers use mathematical algorithms to make statistical inferences about future events from the data given to them.

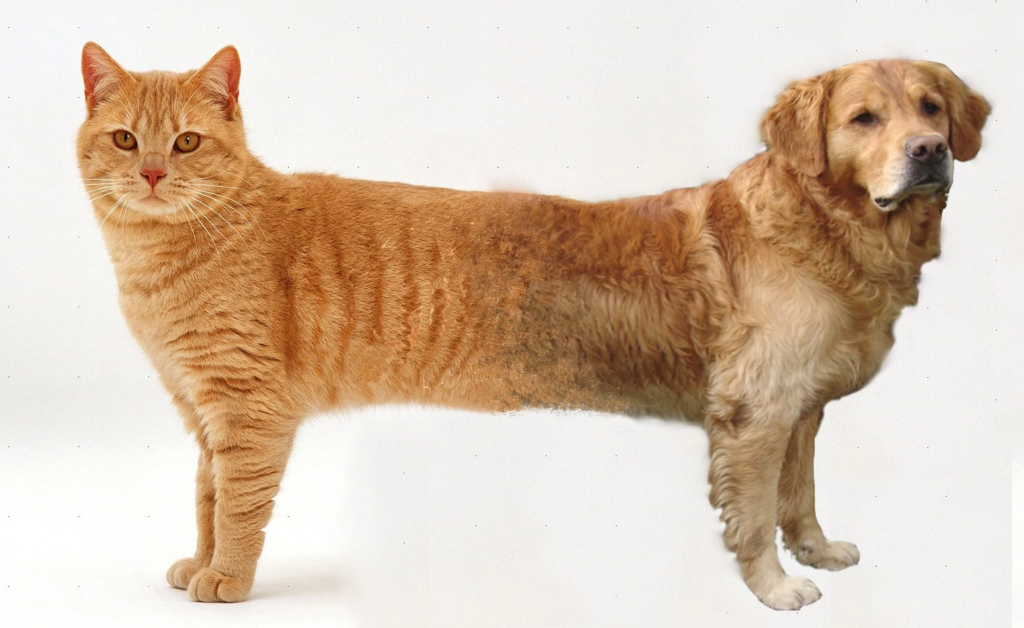

To illustrate, consider the Hello World of AI: the cat/dog sorting algorithm. Given a set of random cat and dog pictures, a primitive algorithm has only a 50% chance (a 50% error rate) of correctly guessing whether the next image is a cat or a dog.

However, the engineer can reduce this error to near zero by telling the algorithm which of its guesses are correct. The algorithm can then adjust a set of weights and biases to help it better guess the next image. The more images (or data) the algorithm is fed, the better it can tune its weights and accurately infer the next animal.
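As a rough illustration of that feedback loop, here is a minimal sketch using a perceptron, one of the simplest learning rules (chosen here for brevity, not because the article prescribes it). The two numeric features per image and all of their values are invented for the example; a real classifier would learn from pixels.

```python
# Hypothetical features per image: (ear_pointiness, snout_length).
# Label 1 = cat, 0 = dog. All values are invented for illustration.
TRAINING_DATA = [
    ((0.90, 0.20), 1), ((0.20, 0.80), 0),
    ((0.80, 0.30), 1), ((0.30, 0.90), 0),
    ((0.70, 0.10), 1), ((0.10, 0.70), 0),
    ((0.85, 0.25), 1), ((0.25, 0.60), 0),
]


def predict(weights, bias, features):
    """Return 1 (cat) if the weighted sum is positive, else 0 (dog)."""
    activation = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if activation > 0 else 0


def accuracy(weights, bias, data):
    """Fraction of examples in data that are classified correctly."""
    correct = sum(1 for features, label in data
                  if predict(weights, bias, features) == label)
    return correct / len(data)


def train(data, epochs=10, learning_rate=0.1):
    """Perceptron rule: nudge the weights whenever a guess is wrong."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for features, label in data:
            error = label - predict(weights, bias, features)  # -1, 0, or 1
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias


# Before any feedback, the model calls everything a dog: half right, half wrong.
untrained_accuracy = accuracy([0.0, 0.0], 0.0, TRAINING_DATA)

# After being told which guesses were wrong, it separates the two groups.
weights, bias = train(TRAINING_DATA)
trained_accuracy = accuracy(weights, bias, TRAINING_DATA)
```

On this small, cleanly separable data set the untrained model is right half the time and the trained one classifies every example correctly. Real image data is far messier, which is why the amount and quality of data matter so much.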

The key ingredient here, as in any machine learning algorithm, is data. The amount, quality, and distribution of data are the three keystone factors in developing good artificial intelligence. Here is a step-by-step procedure exemplifying how data traverses the machine learning realm.

IoT and Machine Learning

Following this decade’s boom in IoT development, companies have invested hundreds of millions of dollars in melding machine learning into IoT design. From autonomous vehicles to facial recognition software, IoT machine learning examples can be found just about everywhere.

Because a network of millions of IoT devices can collect enormous amounts of data from numerous locations, machine learning algorithms can flourish on the steady stream of big data, finding patterns in the given data and producing predictive inferences about the data set.

Unfortunately, many of the foundations these technologies are built on are flawed and inefficient, so much so that many smaller companies struggle to enter this market because they cannot afford to waste limited resources on inefficient, established designs.

Below I will elaborate on these challenges and explain why PubNub can serve as a viable solution.

PubNub as a Machine Learning Solution

Collection Cost and Efficiency

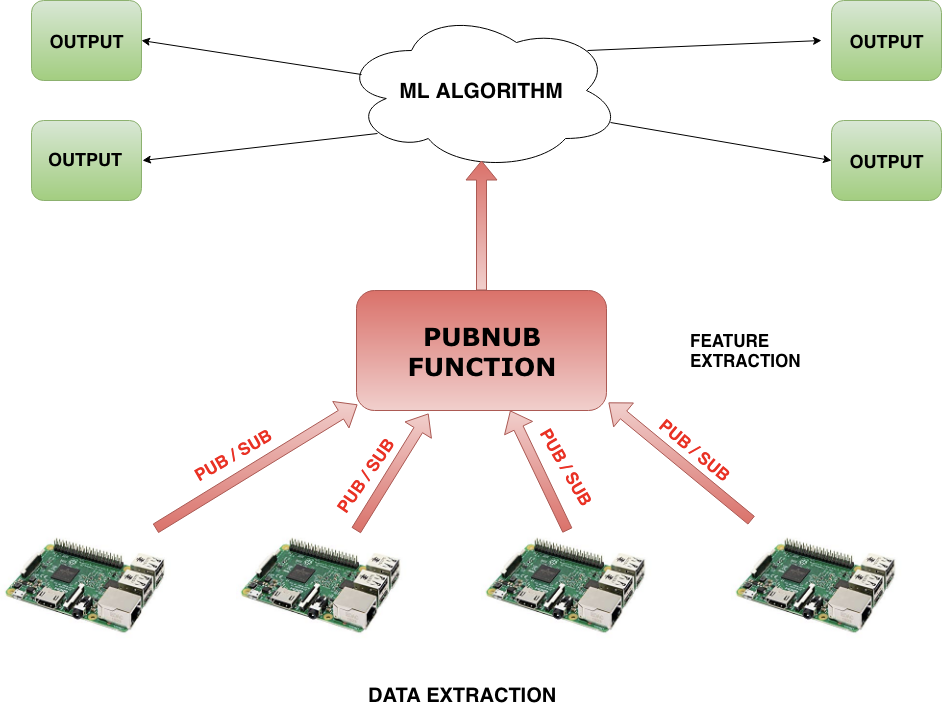

Perhaps the most expensive bottleneck in IoT machine learning design lies in data collection. With millions of IoT devices distributed across a network, a machine learning system requires not only large databases but also costly servers to process the data into a form the algorithm can read.

Consider a driverless car company that aims to improve its autopilot algorithm by learning from the driving analytics of a network of sensor-equipped vehicles. The company can either manually offload each car's data at the main facility at the end of its ride, or transmit the data wirelessly over a network to the main server. In both cases, the data from each car must later be sorted, labeled, and processed before it reaches the main machine learning algorithm.

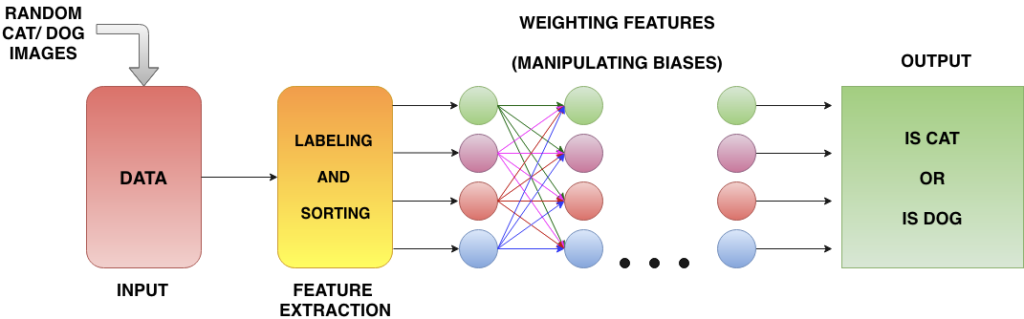

PubNub serves as the most cost-effective and efficient solution in either case by reducing friction in three main areas: data extraction, feature extraction, and portability.

Data Extraction

The PubNub publish/subscribe model allows any IoT device to transmit and receive messages from anywhere in the world in real time. For example, a driverless car network can easily be set up to enable each individual car to transmit driving analytics instantly over separate or shared channels.
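To make the publish/subscribe pattern concrete, here is a minimal in-memory sketch. It is not the PubNub SDK: the `InMemoryBroker` class, the channel names, and the message fields are all hypothetical stand-ins, and a hosted service would deliver messages over the network rather than through local callbacks.

```python
from collections import defaultdict


class InMemoryBroker:
    """Toy stand-in for a hosted publish/subscribe service (not the PubNub SDK)."""

    def __init__(self):
        # Maps each channel name to the callbacks listening on it.
        self._subscribers = defaultdict(list)

    def subscribe(self, channel, callback):
        """Register callback to receive every message published to channel."""
        self._subscribers[channel].append(callback)

    def publish(self, channel, message):
        """Deliver message to every subscriber of channel."""
        for callback in list(self._subscribers[channel]):
            callback(message)


broker = InMemoryBroker()
received = []  # what the training pipeline has collected so far

# The training pipeline listens on one shared, hypothetical telemetry channel.
broker.subscribe("fleet.telemetry", received.append)

# Each car publishes its own readings; it does not need to know who is listening.
broker.publish("fleet.telemetry", {"car_id": "car-001", "speed_kph": 62.5})
broker.publish("fleet.telemetry", {"car_id": "car-002", "speed_kph": 48.0})

# Nobody subscribed to this channel, so the message is simply not delivered.
broker.publish("fleet.diagnostics", {"car_id": "car-001", "battery_pct": 81})
```

The point of the pattern is the decoupling: publishers and subscribers share only a channel name, so cars can join or leave without any change to the code that consumes their data.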

Not only does this eliminate the need to invest heavily up front in reliably fast web servers, but it also allows the network to follow a pay-as-you-grow business model. Any car can be added to or removed from the machine learning algorithm's data network by managing channels, so the cost of the data flow scales linearly and continuously, rather than in discrete jumps with every purchase of a new server.
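The difference between stepwise and linear scaling can be sketched with a little arithmetic. Every figure below is invented purely for illustration; none of them are PubNub prices or real server costs.

```python
import math

# All figures are hypothetical and chosen only to show the shape of each curve.
SERVER_COST = 5000.0     # assumed cost of one self-hosted server
CARS_PER_SERVER = 1000   # assumed number of cars one server can handle
COST_PER_CAR = 5.0       # assumed usage-based cost per connected car


def stepwise_cost(cars):
    """Self-hosted model: pay for a whole new server each time capacity runs out."""
    return math.ceil(cars / CARS_PER_SERVER) * SERVER_COST


def linear_cost(cars):
    """Pay-as-you-grow model: cost rises in proportion to connected cars."""
    return cars * COST_PER_CAR


for cars in (1, 1000, 1001, 2000):
    print(cars, stepwise_cost(cars), linear_cost(cars))
```

Under these assumptions, adding the 1,001st car doubles the stepwise bill, while the linear bill grows by the cost of a single car.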

In only a few moments, a consumer driverless car can learn from the experiences of the millions of cars that preceded it, for the same price that would conventionally have covered only a few thousand.

Learn more about PubNub’s pay-as-you-grow design implementation in this article on how PubNub is a Valuable Skill for the Developer’s ToolBox.

Feature Extraction

Another major challenge with machine learning algorithms lies in the step that follows data extraction: sorting and labeling the data so the algorithm can read it (extracting the data’s features). This task can entail labeling which device the data came from, discarding anomalous data points, and/or sorting the data into specific pattern groups.
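The three tasks named above (labeling the source device, discarding anomalies, and sorting into groups) can be sketched as a single function applied to each incoming message. The field names, the valid speed range, and the group thresholds are all assumptions made for this example.

```python
def extract_features(message, valid_range=(0.0, 250.0)):
    """Label, filter, and group one raw reading; return None for anomalies.

    The message fields ("device_id", "speed_kph"), the valid range, and the
    group thresholds are hypothetical and exist only for illustration.
    """
    speed = message.get("speed_kph")
    low, high = valid_range

    # Discard malformed or physically implausible readings.
    if not isinstance(speed, (int, float)) or not (low <= speed <= high):
        return None

    # Sort the reading into a coarse pattern group.
    if speed < 40:
        group = "low_speed"
    elif speed < 90:
        group = "mid_speed"
    else:
        group = "high_speed"

    # Label the reading with the device it came from.
    return {
        "source": message.get("device_id", "unknown"),
        "speed_kph": speed,
        "group": group,
    }


raw_messages = [
    {"device_id": "car-001", "speed_kph": 32.0},
    {"device_id": "car-002", "speed_kph": 999.0},  # sensor glitch, discarded
    {"device_id": "car-003", "speed_kph": 110.5},
]

processed = [features for features in map(extract_features, raw_messages)
             if features is not None]
```

Only the two plausible readings survive, each tagged with its source and group. Where this logic runs (on the device, on a server, or somewhere in between) is the tradeoff discussed next.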

This process can be done either in the cloud or on a local server, but both options require large amounts of expensive computing power. Additionally, a tradeoff must always be made between the strength of the device and the strength of the feature extraction server. For example, to conserve feature extraction resources on the server, one needs to invest more in the pre-processing power of the device, and vice versa.

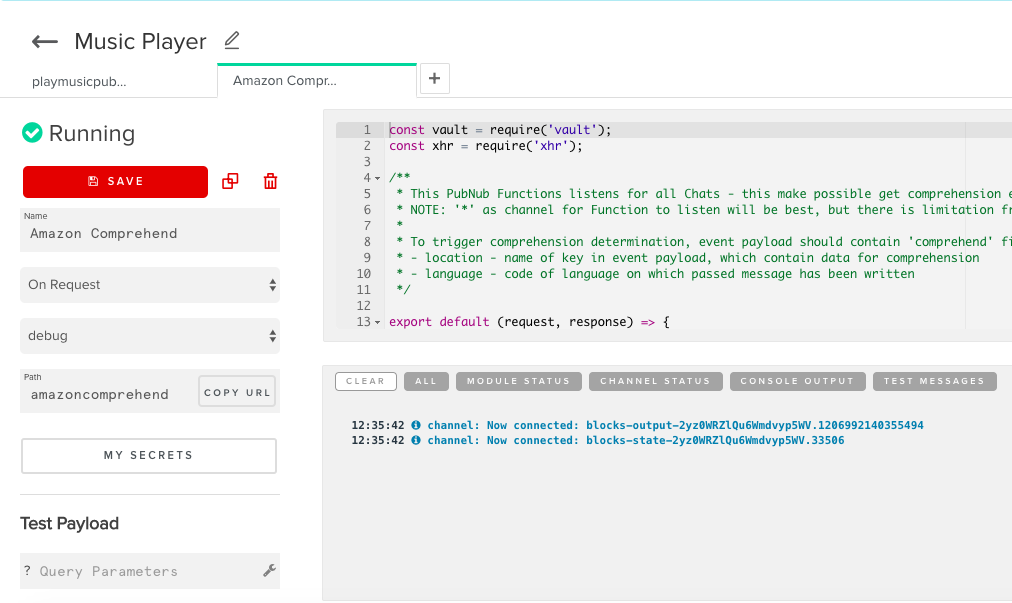

However, PubNub includes powerful tools to help mitigate the cost on both ends. Functions allows users to run a block of JavaScript code on every message published to a channel. When used in tandem with PubNub’s numerous APIs, data can be easily processed, visualized, and ported to other neural networks.

Here, the engineer can program a Function to sort and label the data after it leaves the IoT device and before it reaches the machine learning algorithm, at no added cost. Tools such as PubNub EON can easily visualize data streams as they come in from the IoT device. Data can also be ported from one neural network to the next simply by managing the data valves of Pub/Sub channels.

Reducing the computational power needed for feature extraction allows the IoT devices to be weaker and cheaper, and it removes strain from the engineer’s servers. The algorithm can perform its duties with lower latency, the IoT devices can be cheaper and more plentiful, and cloud storage needs can be greatly reduced.

Check out this article on our blog for more information on how PubNub is integral to Cloud State Machines and the Future of IoT.

Security

Lastly, incorporating PubNub into machine learning design provides a solution to one of the most crippling issues of consumer machine learning algorithms: security.

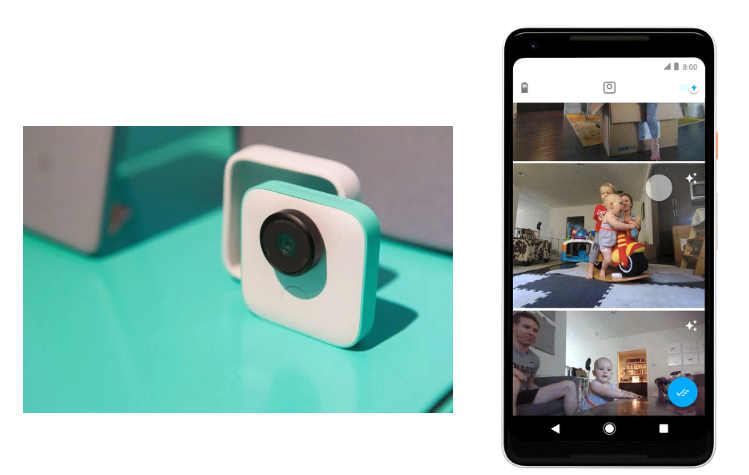

Google Clips, a consumer product developed by Google, combines machine learning and a video camera to produce a stationary camera that records the moments its algorithm believes you value most. By gradually combining user feedback with pre-built biases, the camera can be set up in a living room to record noteworthy moments such as birthday parties and various other Kodak moments.

However, due to major user concern over Google storing people’s data and watching them at all times of the day, Google Clips was designed so that its machine learning runs solely on the camera itself, without depositing any data on a Google cloud server. This made Google Clips more expensive to manufacture and to sell, as the hardware needed to be upgraded to compensate.

If PubNub is embedded into the network framework, all messages across the data network are guaranteed confidentiality. Data extracted from a video camera would never bear any risk of falling into the hands of any major or third-party organization. Therefore, consumer hardware built on PubNub would not require any localized machine learning hardware, making the product cheaper for both the producer and the consumer.

Learn more about PubNub's Compliance and Encryption Methods here.