Real-time Image Recognition, Facial Detection with AIception

Developers and consumers, get ready! The world of Artificial Intelligence (AI) is exploding. As the amount of compute power available to developers increases and the underlying technology of AI advances in leaps and bounds, we are seeing more and more applications of artificial intelligence in daily life.

Enter AIception, a new platform for building image recognition into real-time applications. With AIception, it's possible to give your application “eyes” to detect features in images as they arrive and analyze them in a number of different ways.

In this article, we dive into a simple example of how to build image recognition and facial detection functionality into a real-time Angular 2 web application with a modest 22-line PubNub JavaScript BLOCK and 93 lines of HTML and JavaScript. Our application will take an image URL, process it through AIception, and return possible identifications of the image.

Image Recognition Overview

So, what exactly are these image recognition services we speak of? AIception offers a number of services for detecting objects, estimating age of human face images, detecting adult content and more.

In this article, image recognition refers to processing images to produce a list of detected features, each with an associated confidence score. There are many considerations when analyzing images, such as accuracy, domain-specific features, multiple objects in complex configurations, overtraining, bandwidth, and performance.

As we prepare to explore our sample Angular 2 web application with image recognition, let's check out the underlying AIception API.

AIception API

Image recognition and feature detection is a notoriously difficult area, and it requires substantial effort and engineering resources to maintain high availability and high efficiency in a system of this magnitude. AIception provides a solution to these issues with a friendly UI and easy-to-use API that “just works.” Through the API and user interface, it is possible to detect ages of human facial images, detect objects in images, and much more!

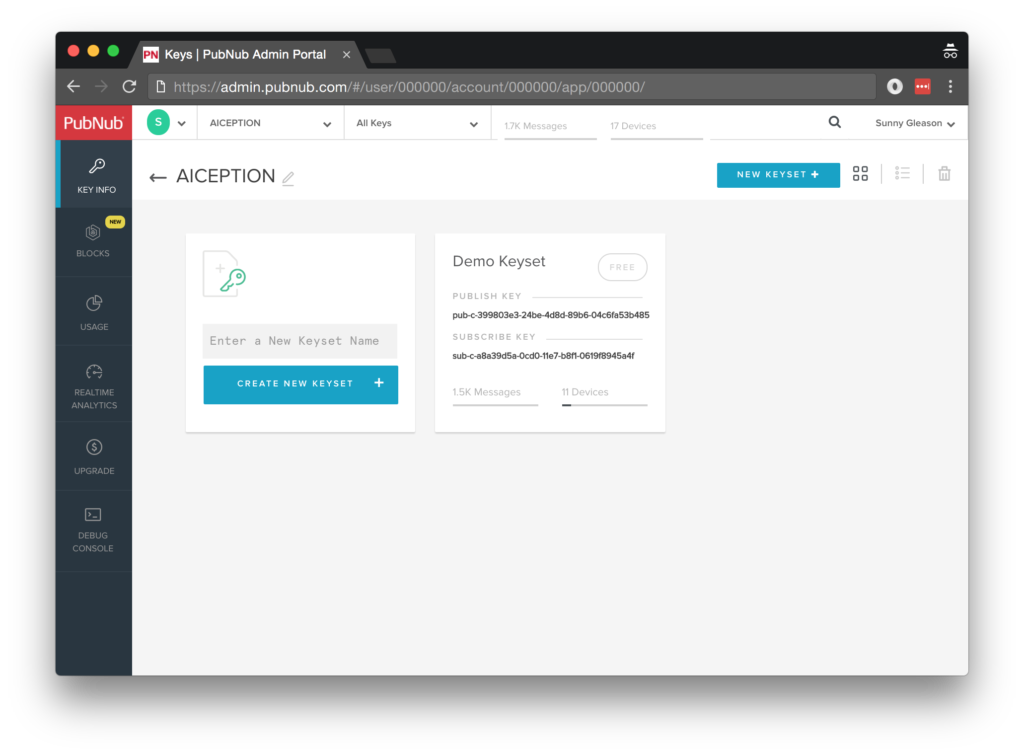

Obtaining your PubNub Developer Keys

You'll first need your PubNub publish/subscribe keys. If you haven't already, sign up for PubNub. Once you've done so, go to the PubNub Admin Portal to get your unique keys. The publish and subscribe keys look like UUIDs and start with “pub-c-” and “sub-c-” prefixes respectively. Keep those handy – you'll need to plug them in when initializing the PubNub object in your HTML5 app below.
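Since forgetting to swap in real keys is the most common stumbling block, here is a minimal sketch of a sanity check based on the prefixes described above. The `checkPubNubKeys` helper is our own illustrative name and is not part of the PubNub SDK.

```javascript
// Hypothetical helper (not part of the PubNub SDK): reports keys that do not
// carry the "pub-c-" / "sub-c-" prefixes described above.
function checkPubNubKeys(keys) {
  const problems = [];
  if (typeof keys.publishKey !== 'string' || !keys.publishKey.startsWith('pub-c-')) {
    problems.push('publishKey should start with "pub-c-"');
  }
  if (typeof keys.subscribeKey !== 'string' || !keys.subscribeKey.startsWith('sub-c-')) {
    problems.push('subscribeKey should start with "sub-c-"');
  }
  return problems;
}

// Placeholders that were never replaced are reported as problems.
console.log(checkPubNubKeys({ publishKey: 'YOUR_PUB_KEY', subscribeKey: 'YOUR_SUB_KEY' }));
```

An empty array means both keys at least look plausible; it says nothing about whether they are valid for your account.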

About the PubNub JavaScript SDK

PubNub plays together really well with JavaScript because the PubNub JavaScript SDK is extremely robust and has been battle-tested over the years across a huge number of mobile and backend installations. The SDK is currently on its 4th major release, which features a number of improvements such as isomorphic JavaScript, new network components, unified message/presence/status notifiers, and much more.

NOTE: In this article, we use the PubNub Angular 2 SDK, so our UI code can use the PubNub JavaScript v4 API syntax!

The PubNub JavaScript SDK is distributed via Bower or the PubNub CDN (for Web) and NPM (for Node), so it's easy to integrate with your application using the native mechanism for your platform. In our case, it's as easy as including the CDN link from a script tag.

That note about API versions bears repeating: the user interfaces in this series of articles use the v4 API (since they use the new Angular2 API, which runs on v4). In the meantime, please stay alert when jumping between different versions of JS code!

Getting Started with AIception

The next thing you'll need to get started with AIception services is an AIception developer account to take advantage of the image processing APIs.

- Go to the AIception signup form on the AIception home page.

- Go to the dashboard and create a new API key (make note of it, you'll need it shortly).

Overall, it's a pretty quick process. And free to get started, which is a bonus!

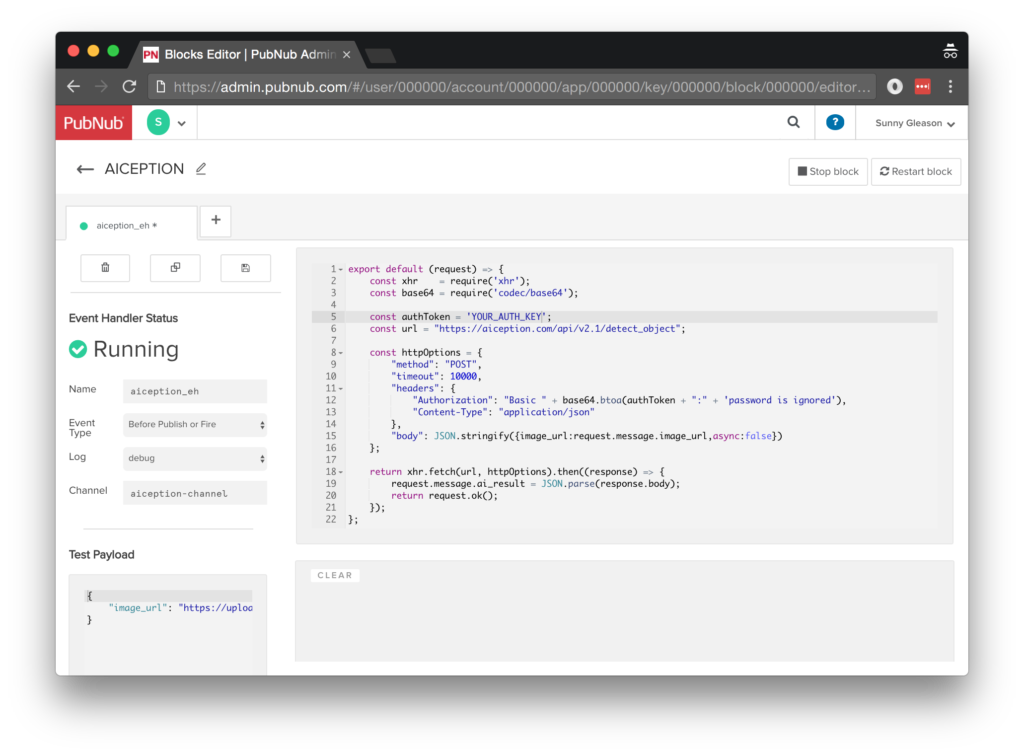

Setting up the BLOCK

With PubNub BLOCKS, it's really easy to create code to run in the network. Here's how to make it happen:

- Go to the application instance on the PubNub Admin Dashboard.

- Create a new BLOCK.

- Paste in the BLOCK code from the next section.

- Start the BLOCK, and test it using the “publish message” button and payload on the left-hand side of the screen (including the Auth Key from the steps above).

That's all it takes to create your serverless code running in the cloud!
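For the test step, a payload along these lines should be enough, assuming the AIception token is hard-coded in the BLOCK as in the code that follows. The only attribute the BLOCK reads is `image_url`; the image here is one of the sample URLs used later in this article.

```javascript
// Test payload for the BLOCK's "publish message" panel.
// image_url is the attribute the BLOCK code reads from request.message.
const testPayload = {
  image_url: 'https://upload.wikimedia.org/wikipedia/commons/1/11/Cheetah_Kruger.jpg'
};

// The panel takes JSON text, so serialize the object before pasting it in.
const testPayloadJson = JSON.stringify(testPayload, null, 2);
console.log(testPayloadJson);
```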

Diving into the Code – the BLOCK

You'll want to grab the 22 lines of BLOCK JavaScript and save them to a file called pubnub_aiception_block.js. It's available as a Gist on GitHub for your convenience.

First up, we declare our dependency on xhr and base64 (for HTTP requests and Basic Auth headers) and create a function to handle incoming messages.

export default (request) => {

const xhr = require('xhr');

const base64 = require('codec/base64');

Next, we set up variables for accessing the service (the API credentials and base URL).

const authToken = 'YOUR_AUTH_TOKEN';

const url = "https://aiception.com/api/v2.1/detect_object";

Next, we set up the HTTP params for the object detection API call. We use a POST request for the URL.

We use the String content of the image_url message attribute as the request parameter, and set the authentication headers. Since object detection can be a potentially long-running call, we set the API async parameter to false and increase the BLOCK XHR timeout to 10000ms (the current BLOCKS maximum; if the API takes longer than this amount of time we'll see errors).

const httpOptions = {

"method": "POST",

"timeout": 10000,

"headers": {

"Authorization": "Basic " + base64.btoa(authToken + ":" + 'password is ignored'),

"Content-Type": "application/json"

},

"body": JSON.stringify({image_url:request.message.image_url,async:false})

};
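If the Authorization header looks opaque, it can help to build and decode one locally. The sketch below reproduces the same Basic Auth string outside of BLOCKS; it uses Node's `Buffer` as a stand-in for the BLOCK's `codec/base64` module, which is an assumption made purely for local experimentation, and both helper names are ours.

```javascript
// Local stand-in for the BLOCK's base64 encoding, using Node's Buffer
// (an assumption for testing outside of BLOCKS).
function basicAuthHeader(authToken) {
  // The token goes in the user-name slot; the password slot is filler text.
  const credentials = authToken + ':' + 'password is ignored';
  return 'Basic ' + Buffer.from(credentials, 'utf8').toString('base64');
}

// Decode a header back into "user:password" to inspect what would be sent.
function decodeBasicAuthHeader(header) {
  const encoded = header.slice('Basic '.length);
  return Buffer.from(encoded, 'base64').toString('utf8');
}

const sampleHeader = basicAuthHeader('YOUR_AUTH_TOKEN');
console.log(sampleHeader);
console.log(decodeBasicAuthHeader(sampleHeader));
```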

Finally, we POST the given data and decorate the message with an ai_result attribute containing the results of the API call. Pretty easy!

return xhr.fetch(url, httpOptions).then((response) => {

request.message.ai_result = JSON.parse(response.body);

return request.ok();

});

};
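The BLOCK above assumes the API call always succeeds and always returns JSON. A slightly more defensive variant might look like the sketch below. It assumes that `xhr.fetch` rejects its promise on a timeout or network failure, and the `error` attribute is a made-up name for this example. The `xhr`, `url`, and `httpOptions` values are passed in so the function can be exercised outside of BLOCKS.

```javascript
// Hypothetical helper: parse the response body without throwing, and always
// return an object with an "answer" list so the UI can iterate safely.
function parseAiResult(body) {
  try {
    const parsed = JSON.parse(body);
    if (parsed && typeof parsed === 'object') {
      return parsed;
    }
  } catch (err) {
    // fall through to the fallback below
  }
  // "error" is an illustrative attribute name, not part of the AIception API.
  return { answer: [], error: 'could not parse API response' };
}

// Sketch of the BLOCK's final step with a fallback on failure.
function handleWithFallback(request, xhr, url, httpOptions) {
  return xhr.fetch(url, httpOptions)
    .then((response) => {
      request.message.ai_result = parseAiResult(response.body);
      return request.ok();
    })
    .catch(() => {
      // Let the message through with an empty result instead of failing.
      request.message.ai_result = { answer: [], error: 'request failed' };
      return request.ok();
    });
}
```

Keeping an empty `answer` list in the fallback means subscribers still receive the message and can render it without special-casing failures.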

All in all, it doesn't take a lot of code to add object recognition to our application. We like that!

OK, let's move on to the UI!

Diving into the Code – the User Interface

You'll want to grab these 93 lines of HTML & JavaScript and save them to a file called pubnub_aiception_ui.html.

The first thing you should do after saving the code is to replace two values in the JavaScript:

- YOUR_PUB_KEY: with the PubNub publish key mentioned above.

- YOUR_SUB_KEY: with the PubNub subscribe key mentioned above.

If you don't, the UI will not be able to communicate with anything and will probably clutter your console log with errors.

For your convenience, this code is also available as a Gist on GitHub, and a Codepen as well. Enjoy!

Dependencies

First up, we have the JavaScript code & CSS dependencies of our application.

<!DOCTYPE html>

<html>

<head>

<title>Angular 2</title>

<script src="https://unpkg.com/core-js@2.4.1/client/shim.min.js"></script>

<script src="https://unpkg.com/zone.js@0.7.2/dist/zone.js"></script>

<script src="https://unpkg.com/reflect-metadata@0.1.9/Reflect.js"></script>

<script src="https://unpkg.com/rxjs@5.0.1/bundles/Rx.js"></script>

<script src="https://unpkg.com/@angular/core/bundles/core.umd.js"></script>

<script src="https://unpkg.com/@angular/common/bundles/common.umd.js"></script>

<script src="https://unpkg.com/@angular/compiler/bundles/compiler.umd.js"></script>

<script src="https://unpkg.com/@angular/platform-browser/bundles/platform-browser.umd.js"></script>

<script src="https://unpkg.com/@angular/forms/bundles/forms.umd.js"></script>

<script src="https://unpkg.com/@angular/platform-browser-dynamic/bundles/platform-browser-dynamic.umd.js"></script>

<script src="https://unpkg.com/pubnub@4.3.3/dist/web/pubnub.js"></script>

<script src="https://unpkg.com/pubnub-angular2@1.0.0-beta.8/dist/pubnub-angular2.js"></script>

<link rel="stylesheet" href="https://maxcdn.bootstrapcdn.com/bootstrap/3.3.7/css/bootstrap.min.css" />

<link rel="stylesheet" href="https://maxcdn.bootstrapcdn.com/bootstrap/3.3.7/css/bootstrap-theme.min.css" />

</head>

For folks who have done front-end implementation with Angular2 before, these should be the usual suspects:

- CoreJS ES6 Shim, Zone.JS, Metadata Reflection, and RxJS: dependencies of Angular2.

- Angular 2: core, common, compiler, platform-browser, forms, and dynamic platform browser modules.

- PubNub JavaScript client: to connect to our data stream integration channel.

- PubNub Angular 2 JavaScript client: provides PubNub services in Angular2 quite nicely indeed.

In addition, we bring in the CSS features:

- Bootstrap: in this app, we use it just for vanilla UI presentation.

Overall, we were pretty pleased that we could build a nifty UI with so few dependencies. And with that… on to the UI!

The User Interface

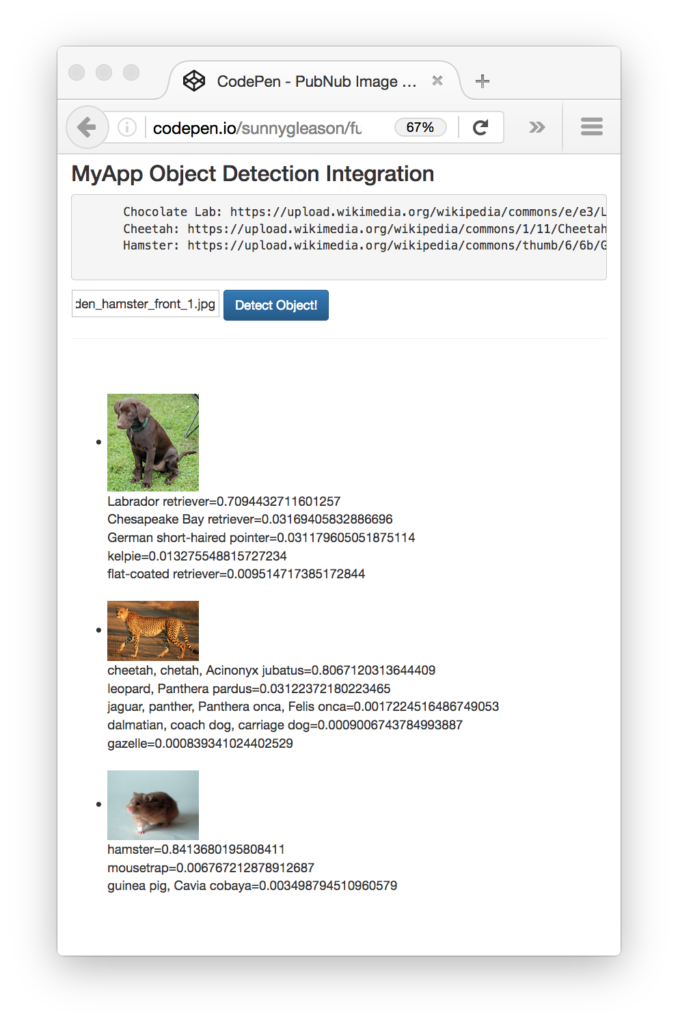

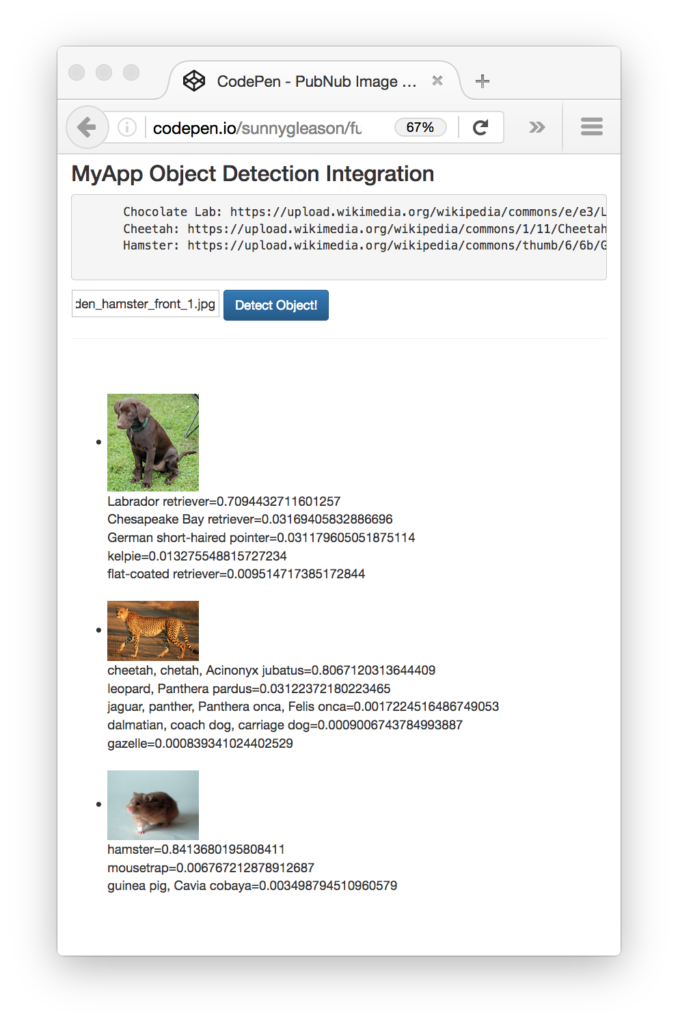

Here's what we intend the UI to look like:

The UI is pretty straightforward – everything is inside a main-component tag that is managed by a single component that we'll set up in the Angular2 code.

<body>

<main-component>

Loading...

</main-component>

Let's skip forward and show that Angular2 component template. We provide a text box for the image URL and a simple button to perform the publish() action to send a request to be processed by the BLOCK.

<div class="container">

<pre>

NOTE: make sure to update the PubNub keys below with your keys,

and ensure that the BLOCK settings are configured properly!

</pre>

<h3>MyApp Object Detection Integration</h3>

<pre>

Chocolate Lab: https://upload.wikimedia.org/wikipedia/commons/e/e3/Labrador_Retriever_(Chocolate_Puppy).jpg

Cheetah: https://upload.wikimedia.org/wikipedia/commons/1/11/Cheetah_Kruger.jpg

Hamster: https://upload.wikimedia.org/wikipedia/commons/thumb/6/6b/Golden_hamster_front_1.jpg/1200px-Golden_hamster_front_1.jpg

</pre>

<input type="text" [(ngModel)]="toSend" placeholder="image url" />

<button class="btn btn-primary" (click)="publish()">Detect Object!</button>

<hr/>

<br/>

<br/>

<ul>

<li *ngFor="let item of messages.slice()">

<img [src]="item.message.image_url" width="100" />

<div *ngFor="let answer of item.message.ai_result.answer">{{answer[0]}}={{answer[1]}}</div>

</li>

</ul>

</div>

The component UI consists of a simple list of events (in our case, the object recognition results). We iterate over the messages in the component scope using a trusty ngFor. Each message includes the data from the AIception API.
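The template reads `ai_result.answer` as a list of pairs, with the label at index 0 and the score at index 1. As a rough sketch of how those pairs could be prepared for display, the hypothetical helper below sorts them by score and tolerates messages that have no `ai_result` yet (for example, if the BLOCK is not running). It assumes the scores are numbers, and the sample data is made up for illustration.

```javascript
// Hypothetical helper: turn a message into display rows, best score first.
function answerRows(message) {
  const answers = (message && message.ai_result && message.ai_result.answer) || [];
  return answers
    .filter((pair) => Array.isArray(pair) && pair.length >= 2)
    .sort((a, b) => b[1] - a[1])
    .map((pair) => pair[0] + '=' + pair[1]);
}

// Made-up sample data in the shape the template expects.
const sampleMessage = {
  image_url: 'https://upload.wikimedia.org/wikipedia/commons/1/11/Cheetah_Kruger.jpg',
  ai_result: { answer: [['leopard', 0.12], ['cheetah', 0.85]] }
};

console.log(answerRows(sampleMessage)); // [ 'cheetah=0.85', 'leopard=0.12' ]
console.log(answerRows({ image_url: 'no result yet' })); // []
```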

And that's it – a functioning real-time UI in just a handful of lines of code (thanks, Angular 2)!

The Angular 2 Code

Right on! Now we're ready to dive into the Angular2 code. It's not a ton of JavaScript, so this should hopefully be pretty straightforward.

The first lines we encounter set up our application (with a necessary dependency on the PubNub Angular 2 service) and a single component (which we dub main-component).

<script>

var app = window.app = {};

app.main_component = ng.core.Component({

selector: 'main-component',

template: `...see previous...`

The component has a constructor that takes care of initializing the PubNub service and configuring the channel name, auth key, and initial value. NOTE: make sure the channel matches the channel specified by your BLOCK configuration and the BLOCK itself!

}).Class({

constructor: [PubNubAngular, function(pubnubService){

var self = this;

self.pubnubService = pubnubService;

self.channelName = 'aiception-channel';

self.toSend = "";

Early on, we initialize the pubnubService with our credentials.

pubnubService.init({

publishKey: 'YOUR_PUB_KEY',

subscribeKey: 'YOUR_SUB_KEY',

ssl:true

});

We subscribe to the relevant channel, create a dynamic attribute for the messages collection, and configure a blank event handler since the messages are presented unchanged from the incoming channel.

pubnubService.subscribe({channels: [self.channelName], triggerEvents: true});

self.messages = pubnubService.getMessage(this.channelName,function(msg){

// no handler necessary, dynamic collection of msg objects

});

}],

We also create a publish() event handler that performs the action of publishing the new message to the PubNub channel.

publish: function(){

this.pubnubService.publish({channel: this.channelName,message:{image_url:this.toSend}});

}

});
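As written, publish() sends whatever is in the text box, including an empty string. One possible guard, sketched here with a hypothetical `isHttpUrl` helper, is to check that the input parses as an absolute http(s) URL before publishing. It relies on the standard `URL` constructor, which is available in modern browsers and Node.js.

```javascript
// Hypothetical helper: accept only absolute http(s) URLs.
function isHttpUrl(text) {
  try {
    const parsed = new URL(String(text).trim());
    return parsed.protocol === 'http:' || parsed.protocol === 'https:';
  } catch (err) {
    return false; // the URL constructor throws on malformed input
  }
}

// Possible use inside the component's publish() handler:
//   if (!isHttpUrl(this.toSend)) { return; }
console.log(isHttpUrl('https://upload.wikimedia.org/wikipedia/commons/1/11/Cheetah_Kruger.jpg'));
console.log(isHttpUrl('not a url'));
```

This only checks the shape of the URL; whether it actually points at an image is still left to the API.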

Now that we have a new component, we can create a main module for the Angular2 app that uses it. This is pretty standard boilerplate that configures dependencies on the Browser and Forms modules and the PubNubAngular service.

app.main_module = ng.core.NgModule({

imports: [ng.platformBrowser.BrowserModule, ng.forms.FormsModule],

declarations: [app.main_component],

providers: [PubNubAngular],

bootstrap: [app.main_component]

}).Class({

constructor: function(){}

});

Finally, we bind the application bootstrap initialization to the browser DOM content loaded event.

document.addEventListener('DOMContentLoaded', function(){

ng.platformBrowserDynamic.platformBrowserDynamic().bootstrapModule(app.main_module);

});

We mustn't forget to close out the HTML tags accordingly.

</script> </body> </html>

Not too shabby for about 93 lines of HTML & JavaScript!

Additional Features

A few features of the AIception service APIs are worth mentioning, including the one we used above.

- Face Age: detect age of human face images.

- Detect Object: the object detection API we've used here.

- Adult Content: screening out adult image content.

All in all, we found it pretty easy to get started with image recognition features using the API, and we look forward to using more of the deeper integration features!

Conclusion

Thank you so much for joining us in the image recognition article of our BLOCKS and web services series! Hopefully it's been a useful experience learning about image-aware technologies. In future articles, we'll dive deeper into additional web service APIs and use cases for other nifty services in real-time web applications.

Stay tuned, and please reach out anytime if you feel especially inspired or need any help!