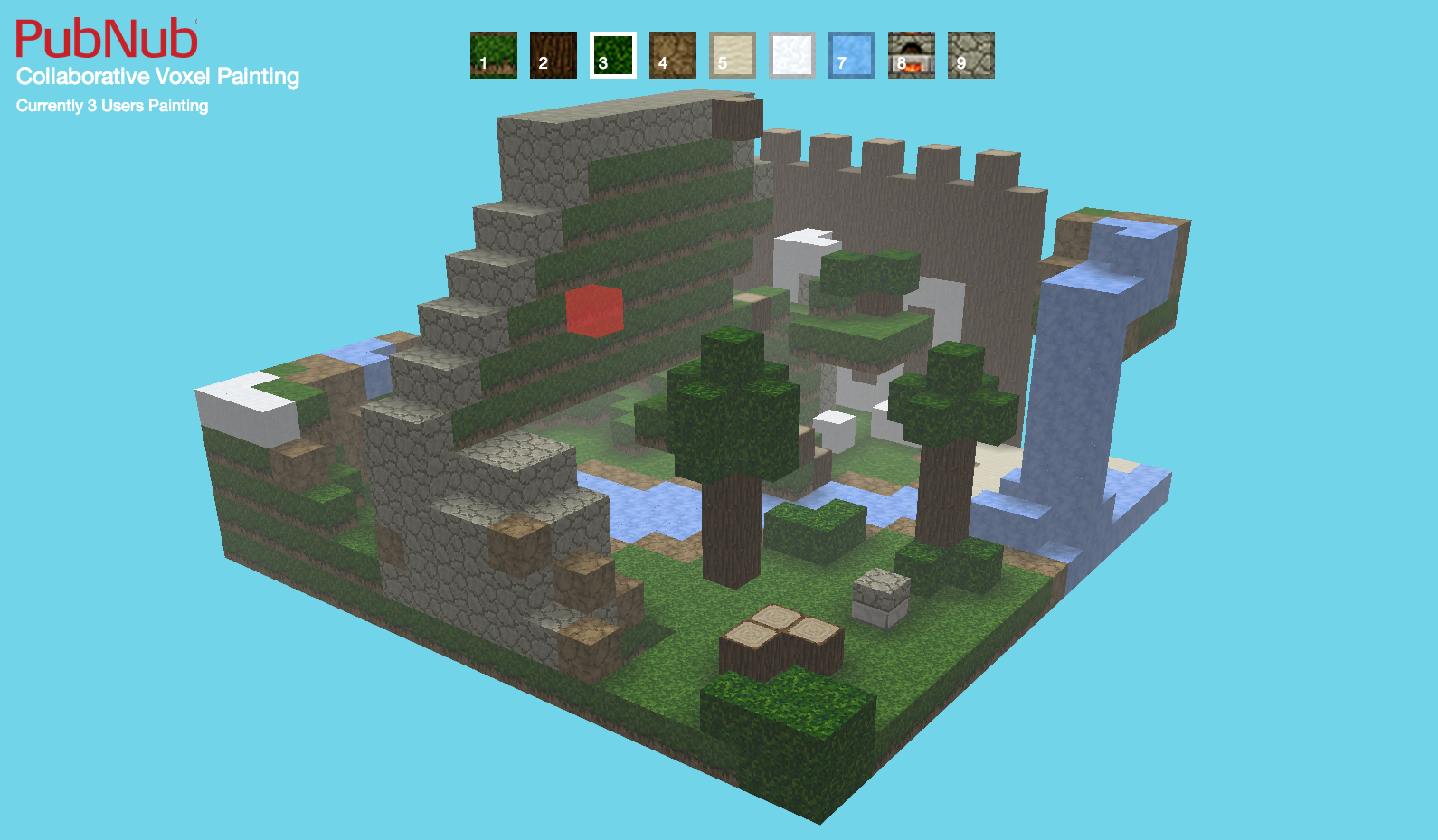

Making Interactive WebGL Applications: StackHack 2.0

We've updated our SDKs, and this code is now deprecated.

The good news is that we've written a comprehensive guide to building a multiplayer game. Check it out!

Want to see it in action? Check out the live demo and GitHub repository below:

Using WebGL Raycasting to Create Interaction

You’ll first need to sign up for a PubNub account. Once you sign up, you can get your unique PubNub keys in the PubNub Developer Portal. Once you have them, clone the GitHub repository and enter your keys where the code initializes PubNub.

Interaction on the web usually begins when the user clicks on the screen. To support this in a 3D scene, the application needs a way to take the 2D coordinate of the click and translate it into the 3D environment. In three.js, this is done with a technique called raycasting.

Raycasting starts at a position and draws a line in a specified direction until it collides with an object. If the ray starts at the position of the user’s camera and points in the direction of the mouse click, the first object it hits is the one the user clicked. In StackHack, a raycaster is updated every time the user moves the mouse and is used to find an object when the user clicks.
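As a rough sketch of the idea, independent of three.js, the plain JavaScript below converts a click position into normalized device coordinates and tests a ray against axis-aligned blocks using the slab method. The function names (`toNdc`, `rayHitsBox`, `pickBlock`) and the block shape are assumptions made for illustration, not StackHack's code. In three.js, the equivalent work is normally handled by `THREE.Raycaster` through `setFromCamera` and `intersectObjects`.

```javascript
// Convert a click in pixels to normalized device coordinates (-1..1, y up).
function toNdc(clientX, clientY, width, height) {
  return {
    x: (clientX / width) * 2 - 1,
    y: -(clientY / height) * 2 + 1,
  };
}

// Slab test: distance along the ray to an axis-aligned box, or null on a miss.
// origin and dir are [x, y, z]; box is { min: [x, y, z], max: [x, y, z] }.
function rayHitsBox(origin, dir, box) {
  let tNear = 0; // starting at 0 ignores anything behind the ray origin
  let tFar = Infinity;
  for (let axis = 0; axis < 3; axis++) {
    if (dir[axis] === 0) {
      // Ray is parallel to this slab: it must already lie inside it.
      if (origin[axis] < box.min[axis] || origin[axis] > box.max[axis]) return null;
      continue;
    }
    const t1 = (box.min[axis] - origin[axis]) / dir[axis];
    const t2 = (box.max[axis] - origin[axis]) / dir[axis];
    tNear = Math.max(tNear, Math.min(t1, t2));
    tFar = Math.min(tFar, Math.max(t1, t2));
    if (tNear > tFar) return null;
  }
  return tNear;
}

// Return the closest block the ray hits, or null if it hits none.
function pickBlock(origin, dir, blocks) {
  let best = null;
  let bestDistance = Infinity;
  for (const block of blocks) {
    const distance = rayHitsBox(origin, dir, block);
    if (distance !== null && distance < bestDistance) {
      best = block;
      bestDistance = distance;
    }
  }
  return best;
}
```

Here `pickBlock` plays the role of the click handler's lookup: given the camera position as `origin` and the click direction as `dir`, it returns the nearest block along the ray, which is the one the user sees under the cursor.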

When the user clicks, the code first checks whether the click landed on an actual block. Because the user sees a single merged mesh, the code has to use a hidden set of separate meshes to determine which block to remove. The details are a little too complex for this post, but more information can be found on optimizing render performance with geometry merging.

WebGL Multiplayer Interaction

This allows a user to build a world on their own screen, entirely by themselves. But what if we want more than one user to collaborate on a scene? This is where PubNub Data Streams can help.

We want two users to be able to interact with one another in the same world. The simplest approach would be to send the entire state of the world to every other user whenever someone adds or removes a block. Unfortunately, this would make the game sluggish and hinder the fluid real-time experience. Since each client only needs to know what changed in another user's environment, we can instead send just an add or remove command every time a user clicks on the screen.
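To make the add/remove idea concrete, here is a minimal sketch of how a client might apply such commands to its local copy of the world. The command shape (`type`, `position`, `color`) and the helper names `blockKey` and `applyCommand` are assumptions for illustration and are not taken from the StackHack source.

```javascript
// World state: a Map from "x,y,z" keys to block data.
function blockKey(position) {
  return position.join(",");
}

// Apply one add/remove command to the world and report whether it changed.
function applyCommand(world, command) {
  const key = blockKey(command.position);
  if (command.type === "add") {
    if (world.has(key)) return false; // a block already occupies this cell
    world.set(key, { position: command.position, color: command.color });
    return true;
  }
  if (command.type === "remove") {
    return world.delete(key); // false if there was nothing to remove
  }
  throw new Error(`Unknown command type: ${command.type}`);
}

const world = new Map();
applyCommand(world, { type: "add", position: [0, 0, 0], color: 0xff0000 });
applyCommand(world, { type: "add", position: [0, 1, 0], color: 0x00ff00 });
applyCommand(world, { type: "remove", position: [0, 0, 0] });
console.log([...world.keys()]); // only the "0,1,0" block remains
```

Each command carries only one block's worth of data, so the message size stays constant no matter how large the world grows.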

With PubNub, we can publish messages to a channel that everyone subscribes to, so everyone interacts within the same instance of the application. Whenever a user adds or removes a block, we publish that change to a common channel, and every subscriber receives a message describing what happened.
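The publish and subscribe flow can be sketched without any network at all. In the example below, `LocalChannel` is a hypothetical in-process stand-in for a shared channel and is not the PubNub API. In the real application, the `publish` and `subscribe` calls would go through the PubNub SDK, and the `Client` class and message shape are likewise assumptions for illustration.

```javascript
// Hypothetical in-process stand-in for a shared channel (not the PubNub API).
class LocalChannel {
  constructor() {
    this.listeners = [];
  }
  subscribe(listener) {
    this.listeners.push(listener);
  }
  publish(message) {
    // Deliver to every subscriber, including the sender.
    for (const listener of this.listeners) listener(message);
  }
}

// Each client keeps its own copy of the scene and updates it only from
// messages received on the channel, so all copies apply the same commands.
class Client {
  constructor(channel) {
    this.channel = channel;
    this.blocks = new Set();
    channel.subscribe((message) => this.onMessage(message));
  }
  onMessage({ type, position }) {
    const key = position.join(",");
    if (type === "add") this.blocks.add(key);
    else if (type === "remove") this.blocks.delete(key);
  }
  addBlock(position) {
    this.channel.publish({ type: "add", position });
  }
  removeBlock(position) {
    this.channel.publish({ type: "remove", position });
  }
}

const channel = new LocalChannel();
const alice = new Client(channel);
const bob = new Client(channel);
alice.addBlock([1, 0, 2]);
bob.addBlock([1, 1, 2]);
alice.removeBlock([1, 0, 2]);
console.log([...alice.blocks], [...bob.blocks]); // both contain only "1,1,2"
```

In this sketch, a client changes its own scene only when its message comes back over the channel. That is one possible design choice: it keeps the sender and the receivers on the same code path, at the cost of a round trip before the local change appears.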

This will allow everyone viewing the application to interact with each other in real time!

Multi-User Applications Made Easy

PubNub Data Streams removes much of the complexity of building multi-user applications. With PubNub, collaborating with a million users becomes a matter of publishing and subscribing rather than setting up servers, dealing with protocols, and solving load-balancing issues. This allowed us to take a simple voxel painting example and turn it into a collaborative environment.

Get Started

Sign up for free and use PubNub to power WebGL apps