How We Sped Up Global Syncs for Eventually Consistent Databases

TL;DR: Our average time to reach global consistency was 1 second. By adding a volatile cache, we cut the time to pick up new data to 1/10 of a second or less (the promised delivery time of our regular PubSub service).

Abstract

In a globally distributed system, one of the biggest problems we face is maintaining a globally accessible source of truth when updates arrive asynchronously from all over the world. Thankfully, this problem is already solved by databases like CassandraDB and its “eventual consistency.”

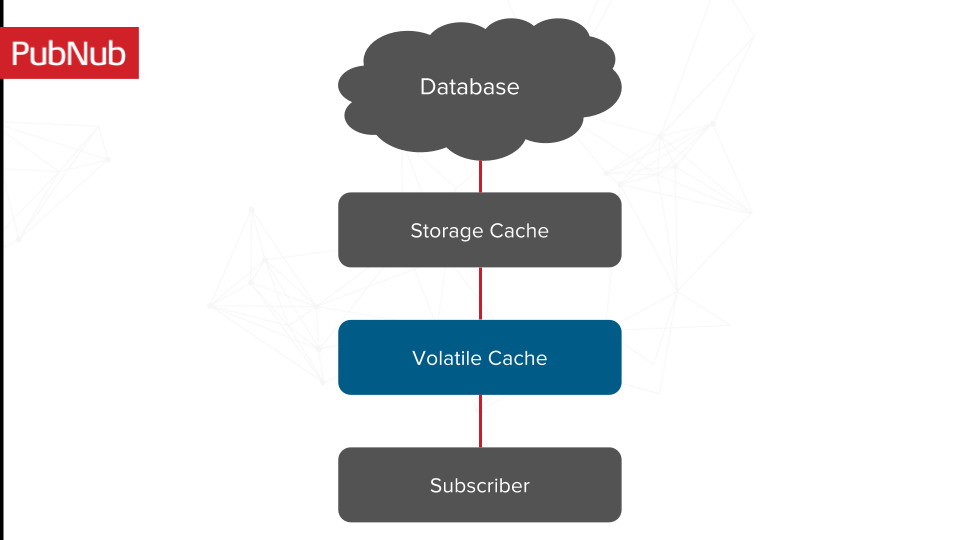

However, we still hit a wall when we need to act on data faster than CassandraDB becomes consistent. To solve this problem we implemented caching on two sides: one cache that lives between CassandraDB and our request server, and one “volatile cache” that is updated upon unverified user actions (those not yet reflected in the Cassandra database) so that we have a faster response time.

Terms

I'll be referencing a number of terms throughout this article, so it is useful to define them with respect to our system.

Subscriber: The machine that maintains a record of the last N messages for every account that is in its shard group (and that recently made a request to this particular machine) and its channels.

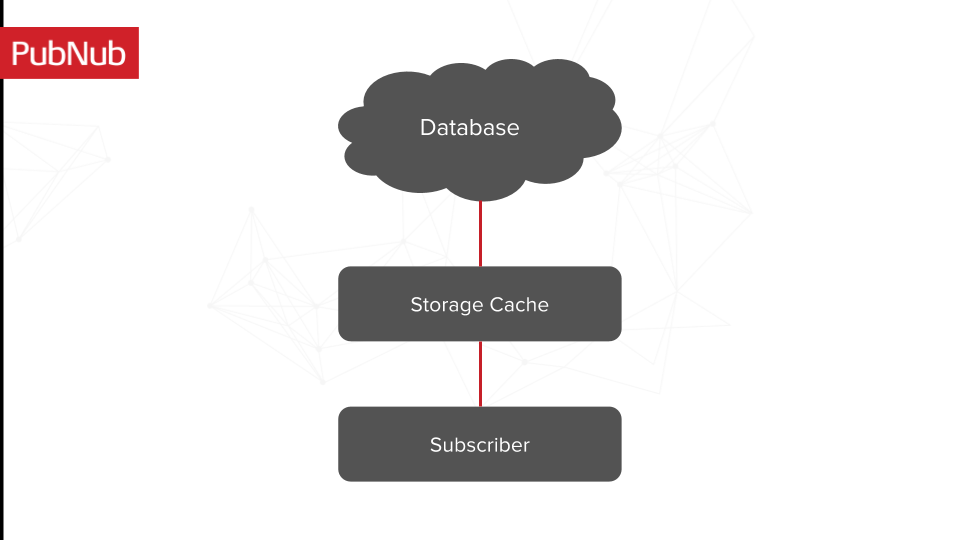

Storage Cache: The cache that sits above our database so that we don’t get stalled by storage read times for account records that we are frequently accessing.

Channel-group: As the name suggests, it is a group of channels. Instead of subscribing to channels A, B, and C, you subscribe to channel-group “ABC”, whose contents are A, B, and C.

CassandraDB: This is the database that we use to maintain all of our user data and channel groups. (If something is not in the subscriber cache, this is most likely where it lives.)

Source of Truth: We have global write updates happening asynchronously, and there is never a guarantee that we have exactly the right answer, but we treat our database as the source of truth because of the eventual consistency promise it provides.

Volatile Cache: I am not using “volatile cache” in its traditional sense. Here, “volatile” highlights that the information in this cache may not be the most reliable and can be replaced from our “source of truth” after an age-out period.

Introduction

Our architecture utilizes CassandraDB to store all of the channel groups, and our subscribers (upon getting a notification that a change has occurred) poll from our database to update themselves with the latest channel groups (all the channels that have been added and removed).

Because this database is eventually consistent, adds and removes could take seconds to propagate. This was problematic for customers who made large changes to channel groups at frequent intervals and would miss messages because of the relatively “slow” update period. “Slow” in our case is 1 second, while our customers expect 1/10 of a second (the rate of our Pub/Sub).

Work

Current Architecture

Before I get into the unique optimization we made, I wanted to quickly describe the storage cache and its role in our ecosystem.

The eventual consistency update time is one problem, but another major problem is the read time from the database itself. Reads from a persistent store (no matter how well optimized) are expensive, and nowhere near as fast as reads from an in-memory cache.

For this reason, we have a layer called the storage cache that sits between our database and its upstream consumer. (I will refer to the subscriber as the client, since it is the one depending on the information coming from the database.) The storage cache functions as an LRU: we maintain the records of the most recent channel groups associated with an account record and send them back upon requests from our client. We update the storage cache asynchronously as long as the account record exists (meaning it has not aged out). Once the entry has aged out, the record falls off the storage cache, and the next read for that entry pays the full cost of a database read.

This was a great optimization. It reduced our read times drastically, and because the data itself was small, our cache hit rate was near 100%.
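To make the read path concrete, here is a minimal sketch of a read-through cache with LRU eviction and an age-out. It is not our production code: the `StorageCache` class, the `load_from_db` callback, and the `max_entries` and `max_age` parameters are all names I made up for illustration, and the asynchronous refresh is left out.

```python
import time
from collections import OrderedDict
from typing import Callable, List, Tuple


class StorageCache:
    """Read-through LRU cache with an age-out, keyed by account ID (illustrative)."""

    def __init__(self, load_from_db: Callable[[str], List[str]],
                 max_entries: int = 1024, max_age: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._load = load_from_db          # hypothetical slow database read
        self._max_entries = max_entries
        self._max_age = max_age
        self._clock = clock
        # account ID -> (time the record was loaded, channel groups)
        self._entries: "OrderedDict[str, Tuple[float, List[str]]]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, account_id: str) -> List[str]:
        now = self._clock()
        entry = self._entries.get(account_id)
        if entry is not None and now - entry[0] < self._max_age:
            self._entries.move_to_end(account_id)   # mark as most recently used
            self.hits += 1
            return entry[1]
        # Missing or aged out: pay the full database read and cache the result.
        self.misses += 1
        channel_groups = self._load(account_id)
        self._entries[account_id] = (now, channel_groups)
        self._entries.move_to_end(account_id)
        while len(self._entries) > self._max_entries:
            self._entries.popitem(last=False)       # evict least recently used
        return channel_groups
```

The injected `clock` is only there so the age-out can be exercised without sleeping; the `hits` and `misses` counters make it easy to check the hit rate.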

New Architecture

Our new architecture addresses the problem of having to wait for database information to propagate downstream. Recall from our problem statement that customers were making changes that didn’t take effect until seconds after the change had been made. This was a problem for us because we strive to make all of our actions as close to real time as possible for our customers.

The solution we came up with was to maintain a “volatile” cache of all of the channel group change information (the adds and the removes) on any given action. Upon sending that data up to our database, we also updated our volatile cache with this information.

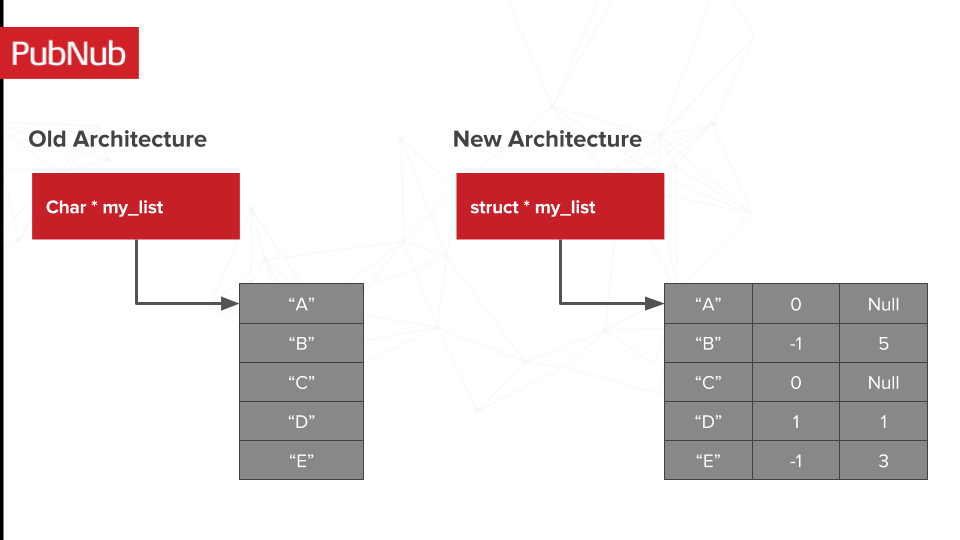
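The write path described above can be sketched roughly as follows. Everything here is hypothetical: `apply_change`, the `write_to_db` callback, and the dictionary layout of the cache are illustrative names and shapes, not our actual interfaces.

```python
import time
from typing import Callable, Dict, Iterable, Tuple

ADD, REMOVE = 1, -1

# (channel group, channel) -> (state, timestamp of the unverified action)
VolatileCache = Dict[Tuple[str, str], Tuple[int, float]]


def apply_change(group: str, adds: Iterable[str], removes: Iterable[str],
                 write_to_db: Callable[[str, list, list], None],
                 cache: VolatileCache,
                 clock: Callable[[], float] = time.time) -> None:
    """Send a channel group change to the database and record it locally."""
    adds, removes = list(adds), list(removes)
    write_to_db(group, adds, removes)   # the database remains the source of truth
    now = clock()
    # Record the same change in the volatile cache so it is visible immediately.
    for channel in adds:
        cache[(group, channel)] = (ADD, now)
    for channel in removes:
        cache[(group, channel)] = (REMOVE, now)
```

The point of the sketch is only the ordering: the database write still happens, and the volatile cache is a local shortcut for the time before that write becomes visible everywhere.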

To maintain some semblance of consistency, we kept a timestamp of when the latest change occurred. The timestamp indicated when an “add” or “remove” action had taken place, so we had a record of the most recent action. To represent this in a sane way, we had to change our cache from a standard list of channels (which is what we used to get from our storage cache) to a list of channels, each with a timestamp and a status (add or remove).

So in our old architecture, a call to the channel group structure would return a pointer to a list of channels. Our new architecture returns a pointer to an object that contains, for each channel, an entry with the channel name, the action that has been taken on it (made by a request but not yet reflected in our DB), and the time this action took place.

The channel name is still the same. The second field represents the state of the channel: “1” indicates that an add action has happened (coming from a REST request) and “-1” indicates that a remove action has happened (also from a REST request). The third state, “0”, which has a corresponding time token of NULL, indicates that this particular channel name came from our source of truth (CassandraDB) and does not need to be aged.

Otherwise, the third field holds the timetoken at which this channel was updated by a REST request to this service, before the change has become consistent in our CassandraDB. Each time we access this channel group, we check the entries against the current timetoken to see whether any adds or removes have “aged” out. If they have, we remove them from our data structure and populate our return channel list without those modifications.
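As a rough sketch of one such entry: the values 1, -1, and 0 and the NULL time token come from the description above, while the `State` and `ChannelEntry` names and the validation rule are my own illustrative choices.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class State(IntEnum):
    REMOVED = -1    # remove requested over REST, not yet confirmed by the database
    CONFIRMED = 0   # came from the source of truth; never aged out
    ADDED = 1       # add requested over REST, not yet confirmed by the database


@dataclass(frozen=True)
class ChannelEntry:
    channel: str
    state: State
    timetoken: Optional[float]  # None (NULL) for CONFIRMED entries

    def __post_init__(self) -> None:
        # A confirmed entry carries no time token; a pending one must have one.
        if (self.state == State.CONFIRMED) != (self.timetoken is None):
            raise ValueError("timetoken must be None exactly when state is CONFIRMED")


confirmed = ChannelEntry("A", State.CONFIRMED, None)
pending_add = ChannelEntry("D", State.ADDED, 1.0)
```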

So in our case, if our age-out time was 4 seconds and we started everything at time 0, we would delete the “D” add action made at time 1 once those 4 seconds had elapsed. The 4-second age-out time is a tunable number that we choose based on how long we think Cassandra takes to become consistent. Since we still take Cassandra to be the source of truth, if a change has not propagated to our DB within the age-out time we have chosen, we throw it out.
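Here is a small sketch of that age-out check. The “D” add at time 1 and the 4-second age-out come from the example above; the other channels, the remove of “B” at time 3, and the choice to keep database-confirmed channels in a separate list are assumptions made to keep the sketch short.

```python
from typing import Dict, List, Tuple

ADD, REMOVE = 1, -1

# channel name -> (state, timetoken) for changes not yet confirmed by the database
Pending = Dict[str, Tuple[int, float]]


def effective_channels(confirmed: List[str], pending: Pending,
                       now: float, age_out: float) -> List[str]:
    """Return the channel list after applying only the unexpired pending changes."""
    # Throw away pending changes that have outlived the age-out window.
    for channel, (_, timetoken) in list(pending.items()):
        if now - timetoken >= age_out:
            del pending[channel]

    channels = set(confirmed)
    for channel, (state, _) in pending.items():
        if state == ADD:
            channels.add(channel)
        elif state == REMOVE:
            channels.discard(channel)
    return sorted(channels)


confirmed = ["A", "B", "C"]                      # state 0: from the source of truth
pending = {"D": (ADD, 1.0), "B": (REMOVE, 3.0)}  # unverified REST actions

print(effective_channels(confirmed, pending, now=4.0, age_out=4.0))  # ['A', 'C', 'D']
print(effective_channels(confirmed, pending, now=6.0, age_out=4.0))  # ['A', 'C']
print(effective_channels(confirmed, pending, now=8.0, age_out=4.0))  # ['A', 'B', 'C']
```

In this sketch the confirmed list never changes. In practice it would have been refreshed from the database in the meantime, so a change that did propagate would show up there by the time its pending entry aged out.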

Known Issues

Although this does solve our latency issue, it also makes our customer channel group records more error prone. For example, if a customer added “A” and removed “B” at time 1 in CA and added “B” at the same time in VA, it is ambiguous which state we actually want to represent. To mitigate this problem, we have made our TTL a reasonably small value (so none of the potential inconsistencies live for very long). We have also put this behind a feature flag, turning it on only for customers who tend to face this high-frequency problem.

Part of the reason I call this a volatile cache is that the ordering of information is called into question by the fact that there is no promise that all the clocks on our machines globally are in sync. We use NTP to get as close as we can to a global time sync, and we combat bad data by treating our cache as an LRU.
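To see why the CA/VA example is ambiguous, suppose (purely as a hypothetical) that two regional records were reconciled with a newest-timestamp-wins rule. The `newest_wins` function below is an illustrative assumption, not a description of our system; it only shows that equal timestamps leave the outcome dependent on the order in which the records are processed.

```python
from typing import Dict, Tuple

ADD, REMOVE = 1, -1
Record = Dict[str, Tuple[int, float]]   # channel -> (state, timestamp)


def newest_wins(first: Record, second: Record) -> Record:
    """Hypothetical reconciliation: keep whichever action has the newer timestamp."""
    merged = dict(first)
    for channel, (state, ts) in second.items():
        if channel not in merged or ts > merged[channel][1]:
            merged[channel] = (state, ts)
    return merged


ca = {"A": (ADD, 1.0), "B": (REMOVE, 1.0)}   # actions recorded in CA at time 1
va = {"B": (ADD, 1.0)}                       # action recorded in VA at time 1

print(newest_wins(ca, va)["B"])   # (-1, 1.0): the remove survives
print(newest_wins(va, ca)["B"])   # (1, 1.0): the add survives
```

The timestamps alone cannot break the tie, which is why we keep the window in which such a disagreement can exist short.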

Conclusion

By adding a volatile cache, we took what had been a major propagation delay (upwards of seconds) for customers making large channel group changes at high frequency and brought it down to 1/10 of a second (the promised delivery time of our regular PubSub service). Putting this behind a feature flag was one way to curb the potential for out-of-memory issues from all the new caching, and to limit the impact on customers who are not affected by this latency issue.

The second design choice, making this an LRU with a configurable age-out time, limits how much we depend on the cache given its volatility (from factors such as clock skew, or two competing actions happening at the same time in different regions and causing a race), while still leaving our database as our ultimate source of truth (rather than trying to reinvent the distributed database system).